“Doing Nothing with AI 1.0” is a robotic installation in which an industrial robotic arm, guided by brainwave measurements and a generative machine learning model, optimises its movement choreography with a single aim: to make its audience do nothing.

In times of constant busyness, technological overload, and the demand for permanent receptivity to information, doing nothing is rarely accepted; it is often seen as provocative and associated with wasting time. People seem to always be in a rush, stuffing their calendars, seeking distraction and the subjective feeling of control, unable to tolerate even short periods of inactivity.

The multidisciplinary project “Doing Nothing with AI” intends to address the common misconception that busyness equals productivity or even effectiveness. On closer inspection, there is little substance in checking our emails every ten minutes or scrolling unfocused through a screen whenever there is a five-minute wait at the subway station. Enjoying a moment of inaction and introspection, letting our minds wander and daydream, may be more productive than constantly keeping ourselves busy.

When a visitor puts on the EEG headband, the installation generates a choreography based on data collected from previous encounters. Every 30 seconds, it evaluates whether the current movement has brought the visitor closer to a measurable state of doing nothing: the activation of the Default Mode Network, a brain network associated with introspection and non-task-related thought. If it has, the choreography is saved and only slowly adapted. If it hasn’t, a contrasting new one is generated. The installation learns, visitor by visitor, what doing nothing might look like for each individual.
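The evaluation loop described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the installation's actual code: the choreography is reduced to a hypothetical flat vector of movement parameters, `read_dmn_score` and `perform` are hypothetical callables standing in for the EEG pipeline and the robot controller, and simple random perturbation and mirroring stand in for the generative model that the real work uses.

```python
import random
from typing import Callable, List

# Assumption: a choreography is a flat list of movement parameters in [-1, 1].
Choreography = List[float]

EVAL_INTERVAL_S = 30.0  # from the text: the installation evaluates every 30 seconds


def _clamp(x: float) -> float:
    return max(-1.0, min(1.0, x))


def adapt_slowly(c: Choreography, rng: random.Random, step: float = 0.05) -> Choreography:
    """Nudge every parameter by at most `step` (stand-in for slow adaptation)."""
    return [_clamp(x + rng.uniform(-step, step)) for x in c]


def contrast(c: Choreography, rng: random.Random, jitter: float = 0.2) -> Choreography:
    """Mirror every parameter and add jitter (stand-in for a contrasting new choreography)."""
    return [_clamp(-x + rng.uniform(-jitter, jitter)) for x in c]


def run_session(
    initial: Choreography,
    read_dmn_score: Callable[[], float],              # hypothetical: higher = closer to "doing nothing"
    perform: Callable[[Choreography, float], None],   # hypothetical: drives the robot for N seconds
    n_intervals: int,
    rng: random.Random,
) -> List[Choreography]:
    """Return the choreographies that moved the visitor closer to the target state."""
    saved: List[Choreography] = []
    current = initial
    previous_score = read_dmn_score()
    for _ in range(n_intervals):
        perform(current, EVAL_INTERVAL_S)
        score = read_dmn_score()
        if score > previous_score:
            saved.append(current)                 # it helped: save it ...
            current = adapt_slowly(current, rng)  # ... and only adapt it slowly
        else:
            current = contrast(current, rng)      # it did not help: try something contrasting
        previous_score = score
    return saved
```

In this sketch the saved choreographies would play the role of the "data collected from previous encounters" that seeds the next visitor's session; how the actual installation stores and reuses that data is not specified here.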

Neither of the interacting entities controls the other. Both are acting, perceiving, learning, and reacting in a setting where the adaptive aesthetic is always in flux, never an optimised result, but an ongoing negotiation.

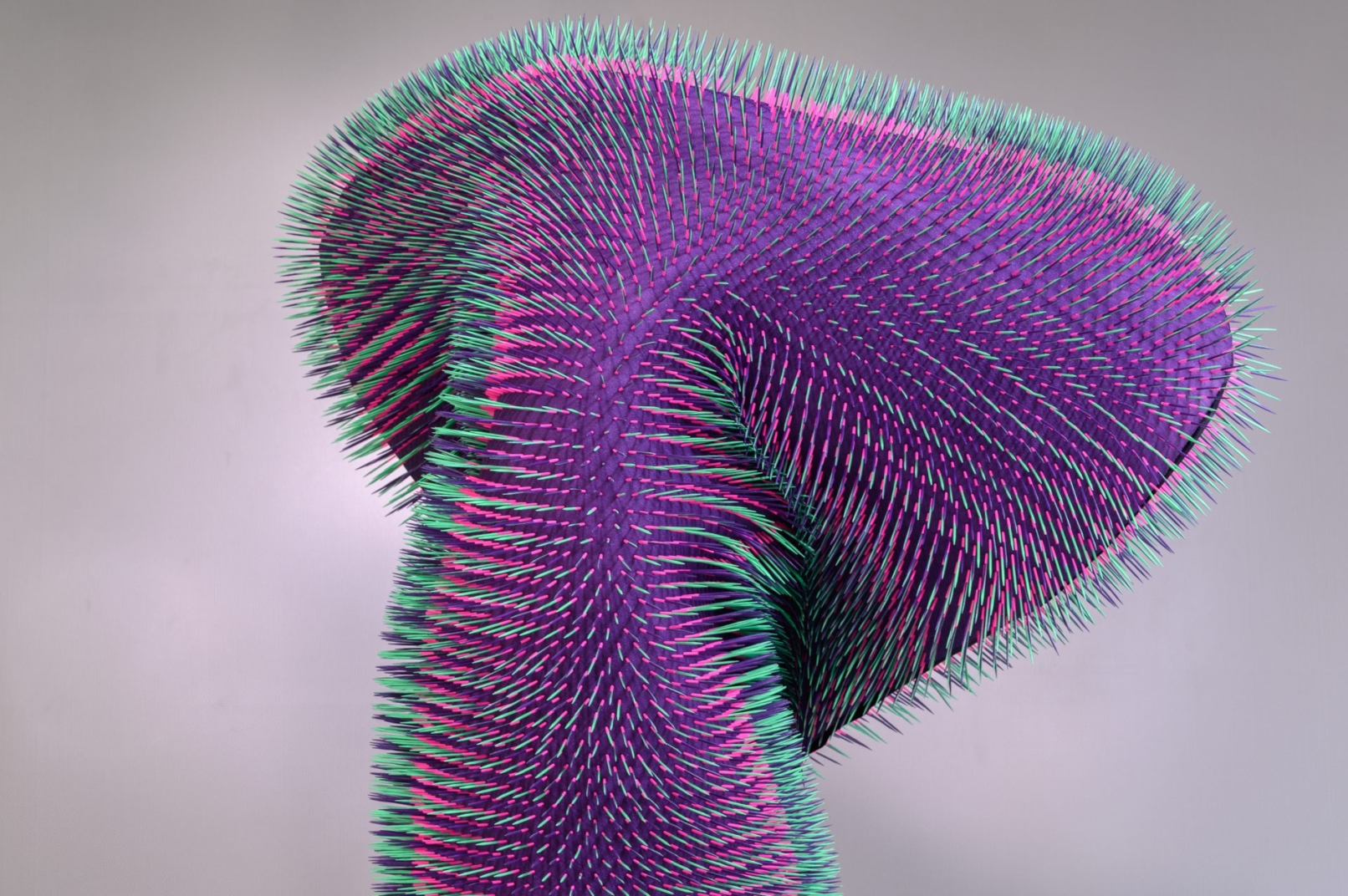

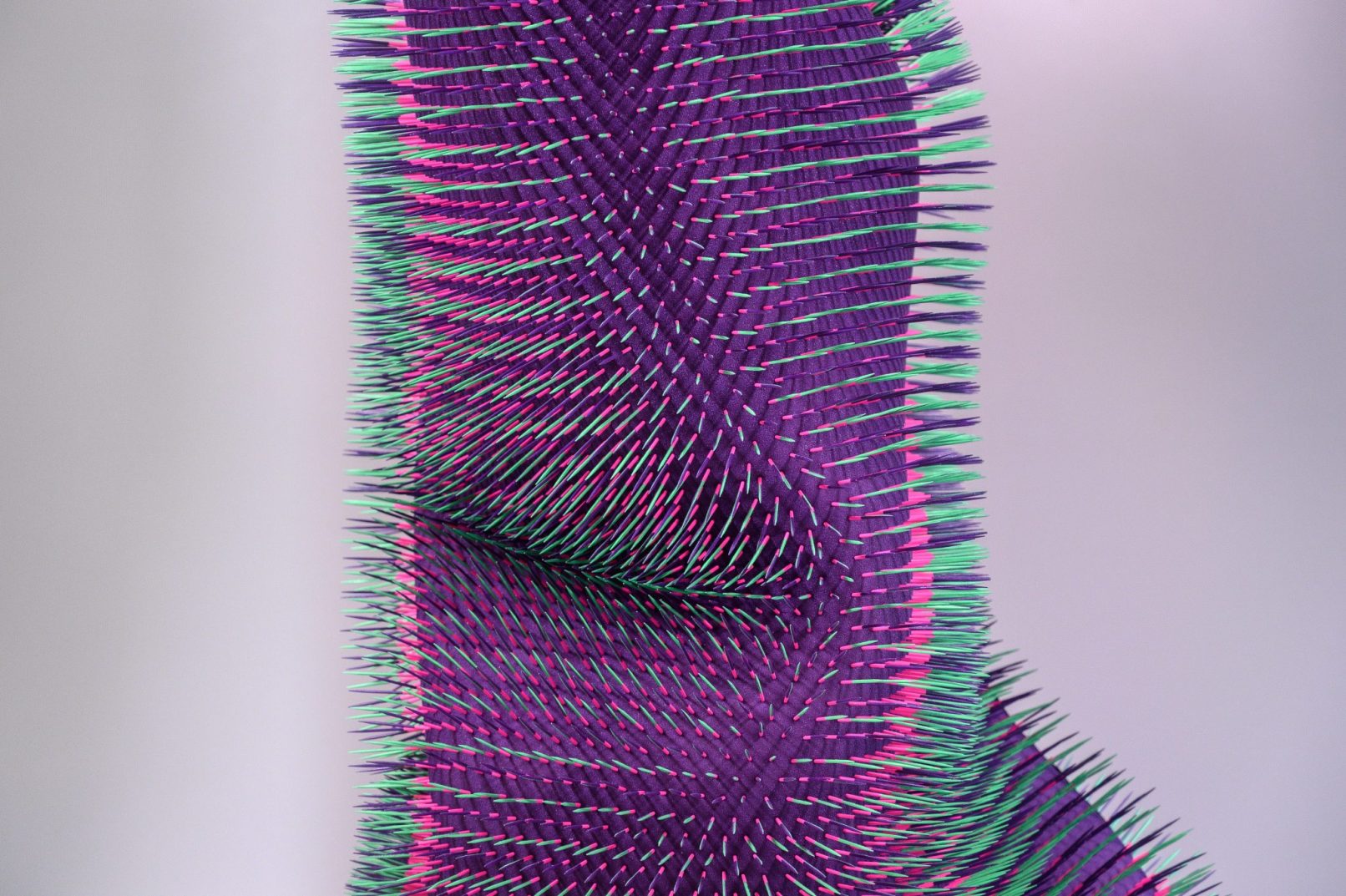

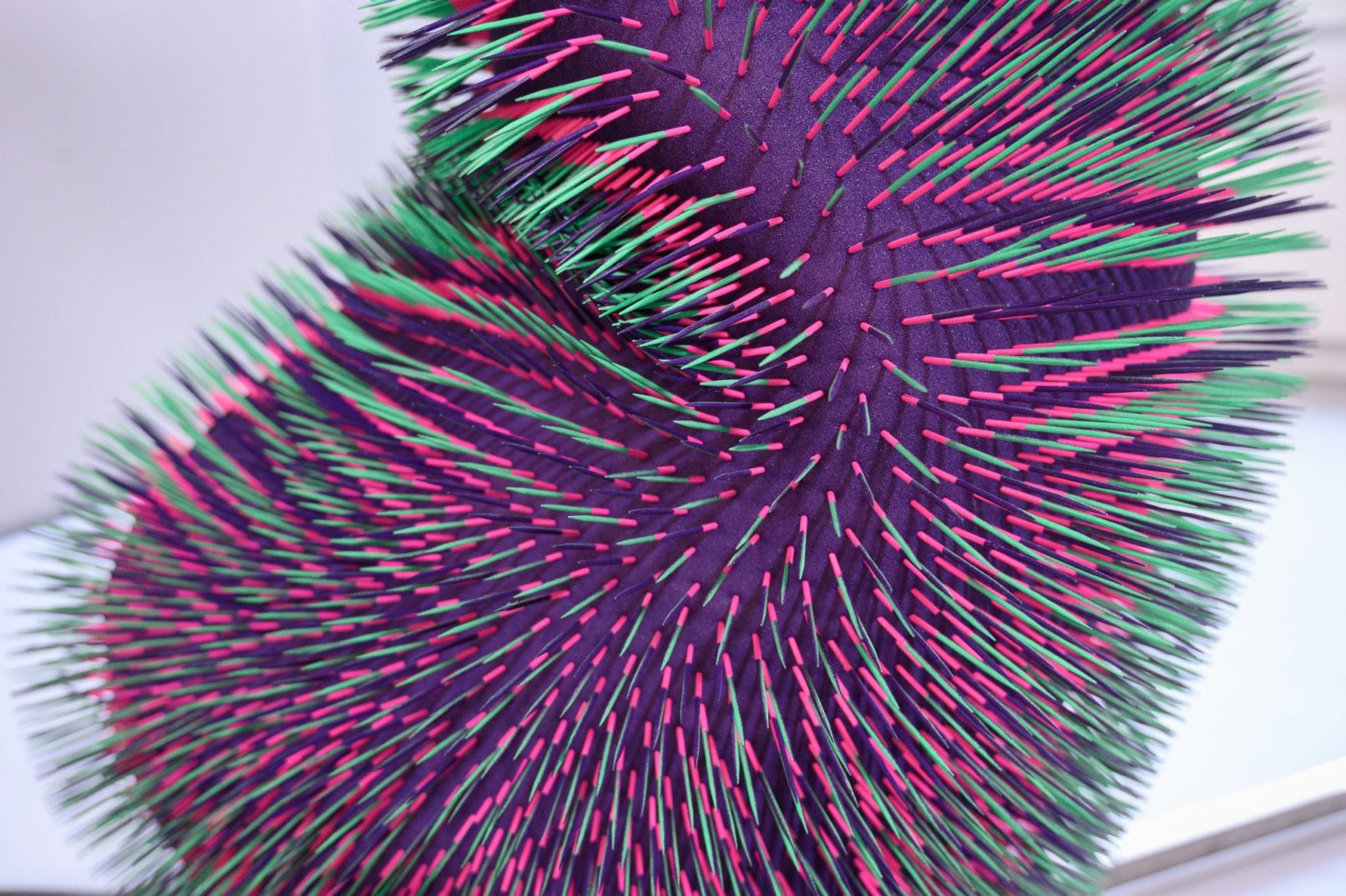

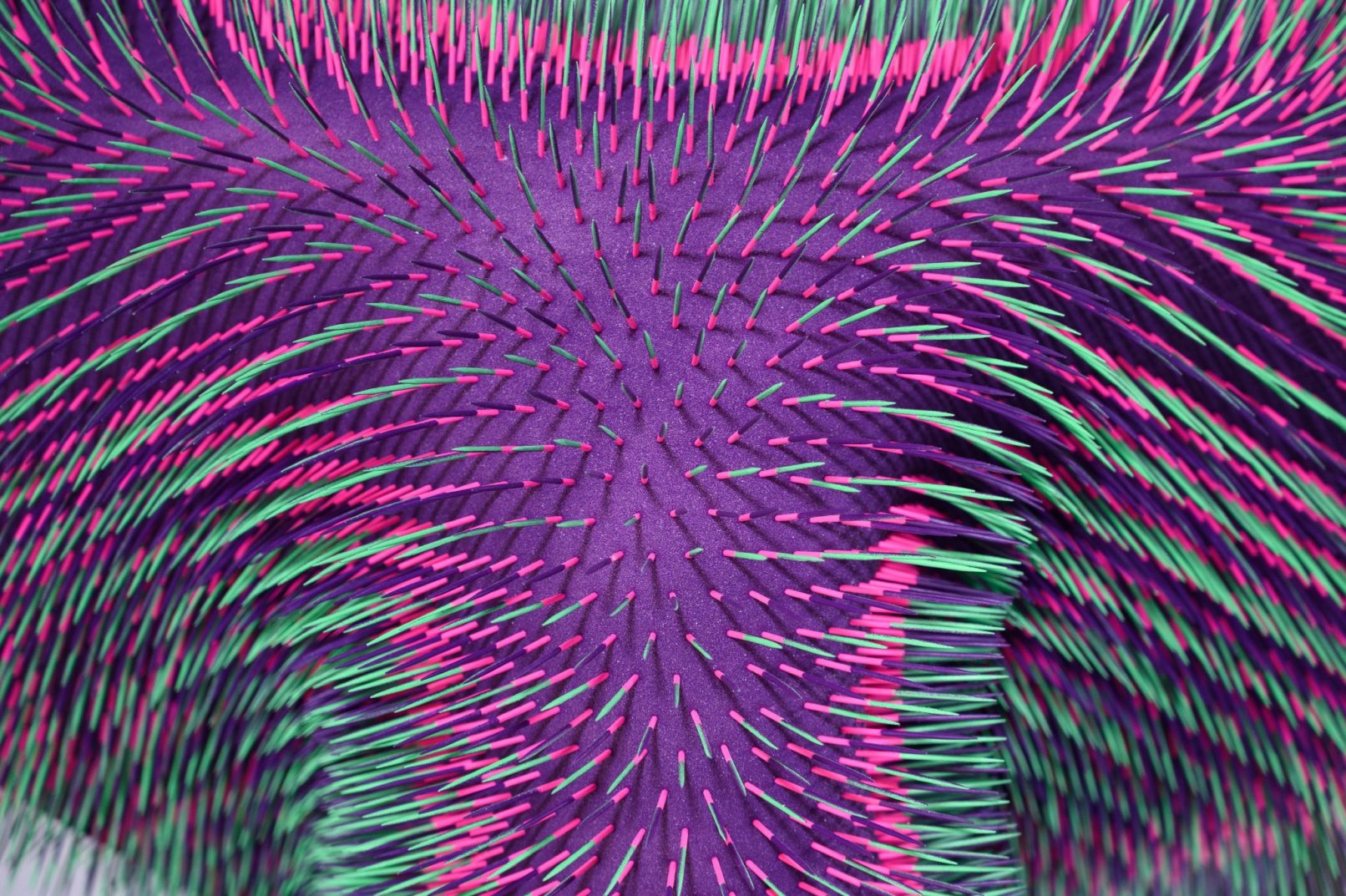

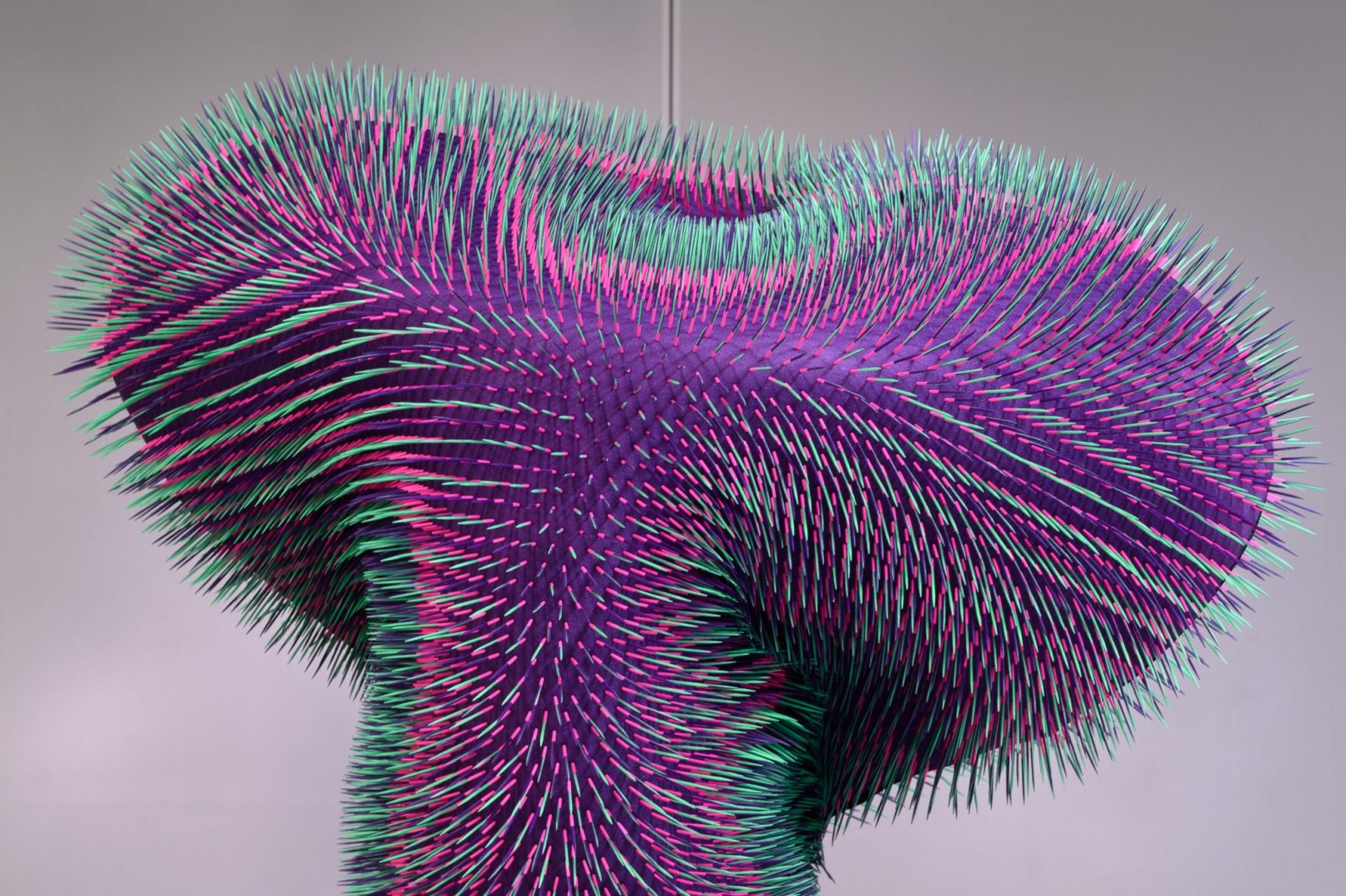

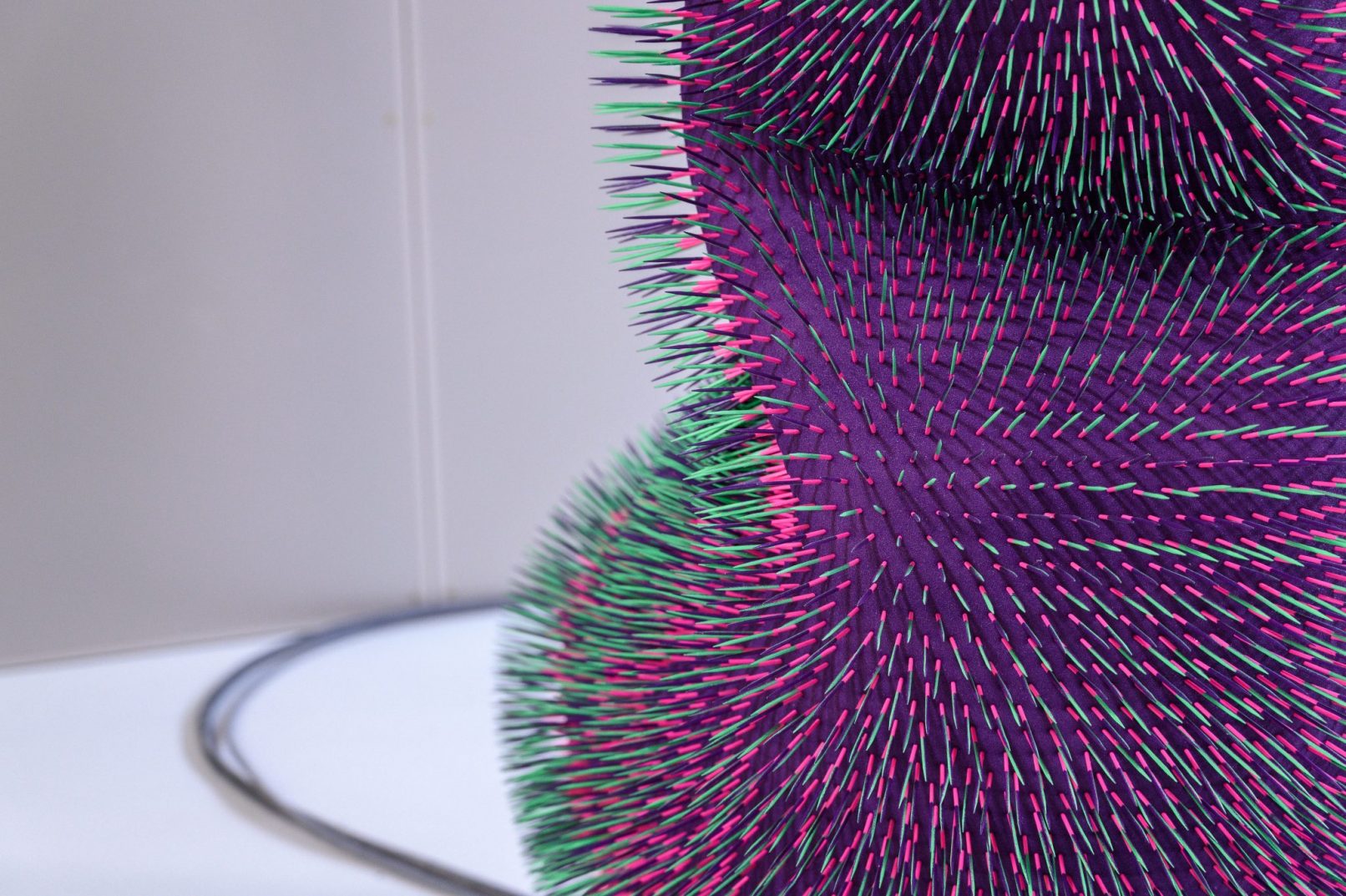

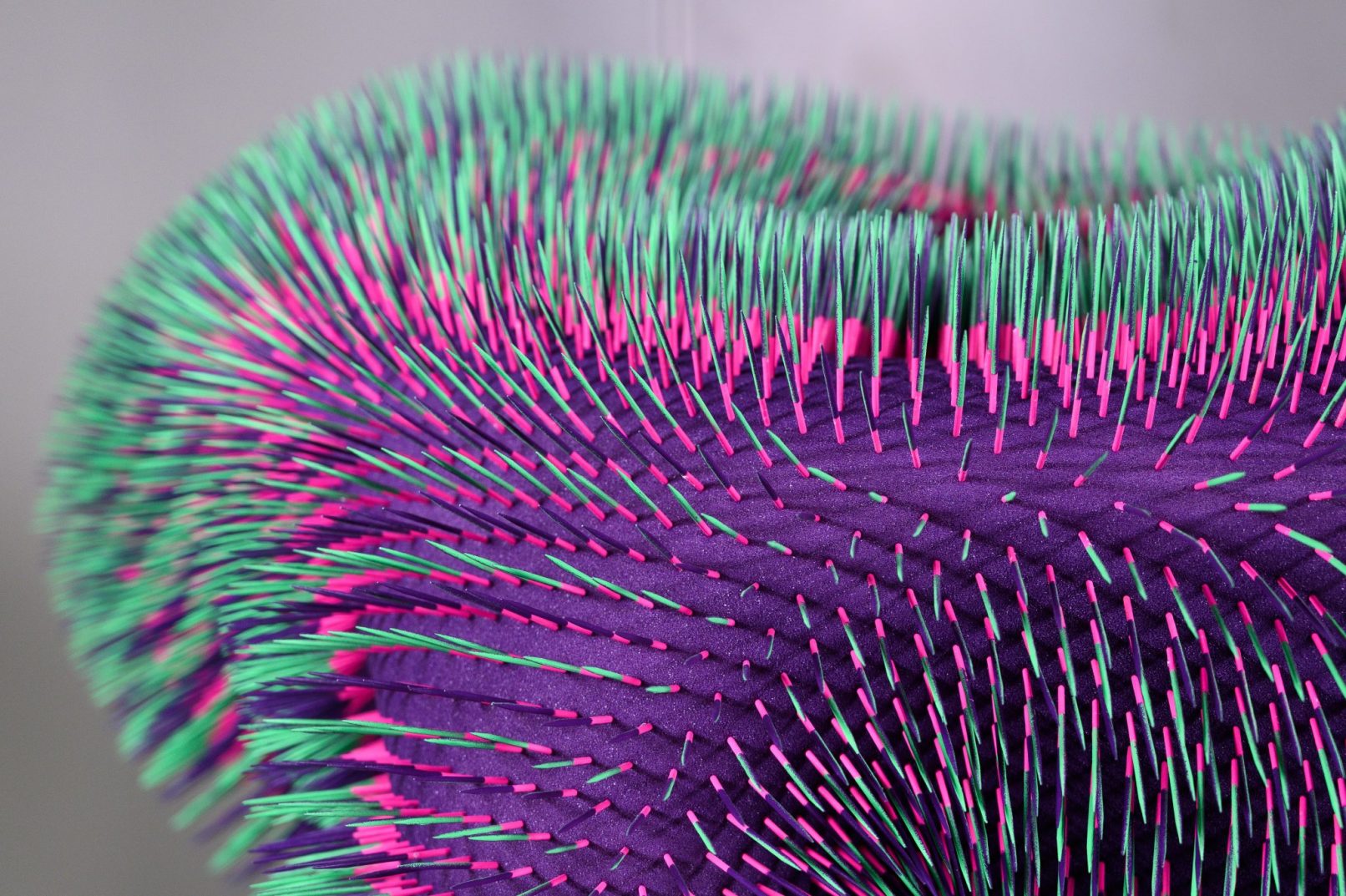

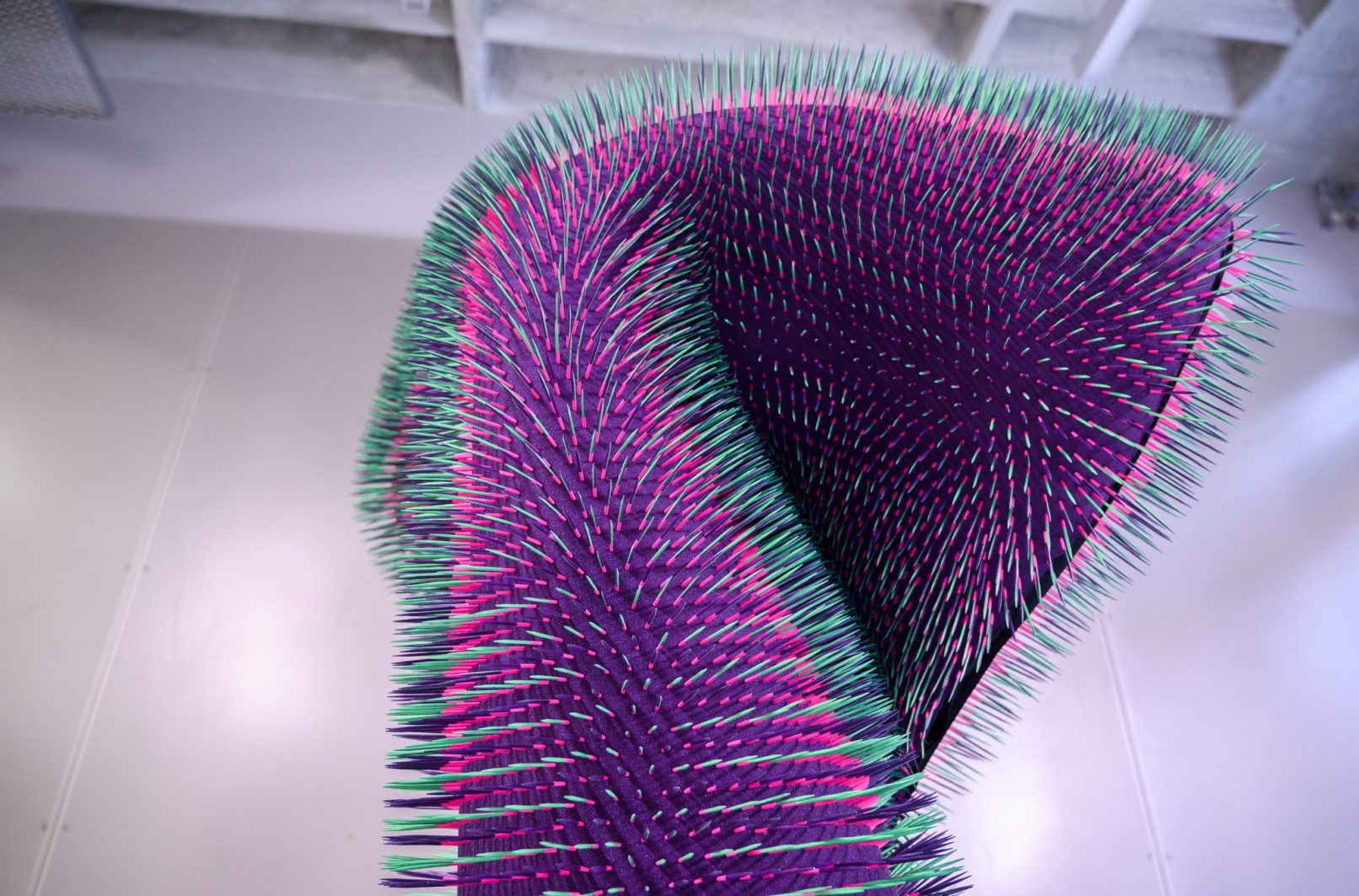

The robot’s skin, fabricated from 23,000 toothpicks placed by the robot itself, amplifies every micro-movement into a rippling, tactile surface: a material trace of the machine’s own labour that precedes and frames the encounter.

More information on the embedded aesthetic human-machine interaction, the neuroaesthetics topic of bottom-up perception, and the use of EEG-fed generative adversarial networks for interactive art within the different “Doing Nothing with AI” iterations can be found in this open access publication by myself, Magdalena Mayer, and Johannes Braumann: https://dl.acm.org/doi/pdf/10.1145/3430524.3440647

Doing Nothing with AI 1.0 is the first work of the Doing Nothing with AI series, which explores the aesthetics and politics of non-productivity in human-machine encounters. It has been exhibited at the Smithsonian Arts + Industries Building, HEK Basel, ArtScience Museum Singapore, Science Gallery Melbourne, Ars Electronica, and NVIDIA GTC.

Core Team

Emanuel Gollob – design, concept & research

Magdalena May – research

Advice and support

Johannes Braumann – robotic support

Dr Orkan Attila Akgun – neuroscientific support

Magdalena Akantisz & Pia Plankensteiner – graphic design

Hardware | KUKA industrial robot | Muse EEG headband | foam | toothpicks

Software | DCGAN | vvvv gamma | Robot Sensor Interface

Hardware 2025 | KUKA industrial robot | Muse EEG headband | foam | toothpicks

Software 2025 | Reinforcement Learning (RL) | vvvv gamma | Robot Sensor Interface

Acknowledgments | Parts of this iteration were produced at the Design Investigations Studio of the University of Applied Arts Vienna

Supported by Vienna Business Agency

References excerpt

Han, Byung-Chul. The burnout society. Stanford University Press, 2020.

Hayles, N. Katherine. “Hyper and deep attention: The generational divide in cognitive modes.” Profession (2007): 187-199.

Busch, Kathrin. P – Passivität. Textem Verlag, Hamburg, and Halle für Kunst, Lüneburg, 2012.

Melville, Herman. Bartleby, the Scrivener: A Story of Wall-Street. 1853.

Neuner, Irene, Jorge Arrubla, Cornelius J. Werner, Konrad Hitz, Frank Boers, Wolfram Kawohl, and N. Jon Shah. “The default mode network and EEG regional spectral power: a simultaneous fMRI-EEG study.” PLoS One 9, no. 2 (2014): e88214.

Raichle, Marcus E. “The brain’s default mode network.” Annual review of neuroscience 38 (2015): 433-447.