Shaky Savine & Doing Nothing with AI

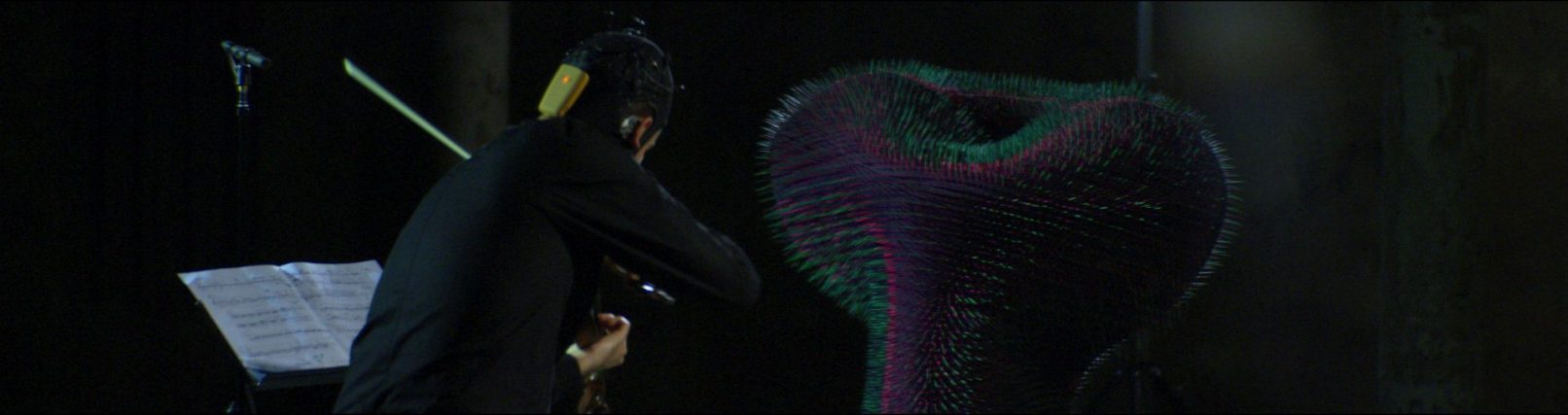

Shaky Savine & Doing Nothing with AI is a live performance in which a solo violinist enters into an embodied duet with the neuroreactive robotic installation Doing Nothing with AI. While improvising the modular violin composition Shaky Savine in response to the robot’s evolving motions, the musician wears an EEG cap that captures their brain activity in real time.

The installation analyses this neural data to isolate signals from the default mode network, a decentralised network of brain regions whose largely unconscious activity is associated with introspection and doing nothing. Guided by this input, the robot attempts to learn which movement patterns might reactivate the performer’s resting state. Rather than hearing the music, the robot listens to the performer’s mental rhythms and adapts its movements accordingly, both influencing and responding to the performer’s unconscious doing-nothing state. Yet the focused act of improvising the violin composition naturally suppresses the very doing-nothing brain activity that the embedded AI seeks to elicit. This divergence of objectives becomes the creative engine of the work, generating a dynamic encounter in which human and machine co-compose in real time.

The performance critically explores how AI assemblages reshape our experience of idleness, introspection, and agency. It raises questions about authorship and embodied intention within human–machine collaboration. Rather than seeking seamless alignment, Shaky Savine & Doing Nothing with AI embraces the friction between human and machinic goals as fertile ground for reimagining doing nothing with AI as an active co-negotiation, one that challenges prevailing notions of productivity and invites deeper reflection on the future of creative agency.

More information on the embedded aesthetic human–machine interaction, the neuroaesthetic topic of bottom-up perception, and the use of EEG-fed generative adversarial networks for interactive art across the different “Doing Nothing with AI” iterations can be found in this open-access publication by myself, Magdalena Mayer, and Johannes Braumann: https://dl.acm.org/doi/pdf/10.1145/3430524.3440647

Music

Armin Sanayei | composition

Jacobo Hernández Enríquez | violin

Alexander Yannilos | recording & sound mastering

Installation

Emanuel Gollob | design, concept & research

Magdalena Mayer | concept & research

Johannes Braumann | robotic advice

Dr. Orkan Attila Akgün | neuroscientific advice

Movie

Anna Mitterer | director & cut

Philipp Windsor-Topolsky | camera & light

Tobias Aschermann | focus puller, DIT & colour grading

Aram Baronian | dolly grip

Hardware | KUKA industrial robot | Enobio EEG Cap | foam | toothpicks

Software | Reinforcement Learning (RL) | vvvv gamma | Robot Sensor Interface

Acknowledgements | Supported by the Vienna Business Agency | Parts of this iteration were produced at the Design Investigations Studio of the University of Applied Arts Vienna | The project was realized as a co-production between Ensemble Reconsil and REAKTOR